30+ Productivity Statistics Showing How AI Tool Stacks Are Reshaping Work in 2026

Last updated: April 2026

AI tool adoption has moved from experimentation to operational infrastructure. The data now shows real productivity gains, real cost shifts, and real failure patterns across teams using stacked AI tools for coding, writing, support, sales, and operations. Below is a curated set of 30+ statistics from McKinsey, GitHub, Stanford, MIT, Microsoft, Stack Overflow, and other tier-1 sources, organized by where AI is moving the needle (and where it isn't).

For context on the underlying tools driving these numbers, see Calliber's AI startup tech stack guide and workflow automation statistics.

Key Takeaways

- Developer velocity gains are real but uneven. Coding assistants speed up specific tasks by 26-55%, but full-cycle productivity gains are smaller once review and rework are counted.

- Knowledge workers save 1-2 hours per day. Microsoft, Salesforce, and BCG studies show consistent time savings on writing, research, and summarization, but only when tools are stacked into existing workflows.

- Most AI deployments still fail. 70-85% of enterprise AI initiatives miss their stated goals, usually due to integration gaps and unclear success metrics, not model quality.

- Stack sprawl is the new tax. The average AI-forward team now runs 7-12 AI-adjacent tools, and tool-switching cost is starting to eat into the productivity gains.

- ROI shows up in months 6-12. Teams that measure properly see payback within a year. Teams that don't measure usually conclude AI "didn't work."

- Adoption is bimodal. Power users get 3-5x more value than the average user from the same tools. Training and workflow design matter more than tool selection.

Developer Productivity: AI Coding Assistants Inside the Stack

1. GitHub Copilot users complete tasks 55% faster on isolated coding tasks

In the original GitHub controlled study, developers using Copilot finished a JavaScript HTTP server task 55% faster than the control group. The study has been widely cited as the headline number for AI coding productivity, but it measured a single bounded task, not full-cycle delivery.

2. METR study found experienced developers were 19% slower with AI assistants

A 2025 METR randomized trial on experienced open-source developers working in mature codebases found AI tools made them 19% slower on real tasks, even though developers self-reported feeling 20% faster. Context, codebase familiarity, and review overhead matter more than raw generation speed.

3. 76% of developers used or planned to use AI tools in 2024

The Stack Overflow Developer Survey found 76% of respondents are using or planning to use AI tools in their development process, up from 70% the previous year. Adoption has saturated quickly across the developer population.

4. Only 43% of developers trust the accuracy of AI tools

The same Stack Overflow data shows trust is far behind adoption. Just 43% of developers trust AI output accuracy, essentially flat from 42% the year before. Use is high. Confidence is not.

5. Copilot users completed 26% more tasks in a large field experiment

A 2024 Microsoft field experiment across 4,867 developers at Microsoft, Accenture, and a Fortune 100 company found a 26.08% increase in completed tasks for Copilot users. Junior developers benefited disproportionately.

6. Less experienced developers see 27-39% productivity gains, well above seniors

The same study found output from junior developers rose 27-39% with Copilot, a markedly larger gain than senior developers saw. AI tools compress the experience gap on routine work, but seniors still outperform on architecture and ambiguous tasks.

Knowledge Worker Productivity: Writing, Research, Summarization

7. Microsoft Copilot users save 14 minutes per day on average

Microsoft's Work Trend Index 2024 reports early Copilot users save an average of 14 minutes per day on email and document tasks. Modest in isolation, but it compounds: roughly 56 hours, or about 7 eight-hour working days, per year per user, assuming around 240 working days.
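As a back-of-envelope check on how a small daily saving annualizes, here is a minimal sketch. The 240 working days per year and the 8-hour day are assumptions for illustration, not figures from the Microsoft report, and the result moves noticeably if you change either one.

```python
def annualized_days_saved(minutes_per_day: float,
                          working_days_per_year: int = 240,    # assumption
                          hours_per_working_day: float = 8.0   # assumption
                          ) -> float:
    """Convert a daily time saving into equivalent working days per year."""
    total_hours = minutes_per_day * working_days_per_year / 60
    return total_hours / hours_per_working_day


# 14 minutes/day is the figure quoted above; everything else is assumed.
print(round(annualized_days_saved(14), 1))  # 7.0
```

Swapping in your own team's working calendar is the point of keeping the assumptions as parameters.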

8. 90% of Copilot users say it saves them time on tasks

Microsoft reports 90% of users say Copilot saves time, 85% say it helps them get to a good first draft faster, and 84% say it makes the work easier. Self-reported, but the directional signal is consistent across studies.

9. BCG consultants completed 12% more tasks and 25% faster with GPT-4

A 2023 BCG and Harvard study of 758 BCG consultants found those with GPT-4 access completed 12.2% more tasks and finished 25.1% faster. Quality scores were 40% higher than the non-AI control group on tasks within the model's capabilities.

10. Same study found a 19 percentage-point quality drop on tasks beyond the model's frontier

The "jagged frontier" finding: on tasks outside GPT-4's capability boundary, AI-using consultants were 19 points more likely to produce wrong answers. Knowing which tasks to delegate to AI is a skill, not a default.

11. Customer support agents handled 13.8% more issues per hour with AI

A 2023 Stanford and MIT study of 5,179 customer support agents found those using a generative AI assistant resolved 13.8% more chats per hour. Novice agents saw 35% gains. Senior agents saw essentially zero gain.

12. Writers saved 40% of time using ChatGPT

A 2023 MIT writing study of 444 college-educated professionals on mid-level writing tasks found ChatGPT cut average completion time by 40% and increased output quality by 18%.

Enterprise AI Adoption and Spend

13. 78% of organizations report using AI in at least one business function

The McKinsey State of AI survey shows 78% of organizations now use AI in at least one function, up from 55% the previous year. Marketing, sales, and product development are the top use cases.

14. 67% of organizations expect to increase AI investment in the next three years

The same McKinsey report found 67% of respondents expect their organizations to increase AI investment over the next three years. Less than 1% expect a decrease.

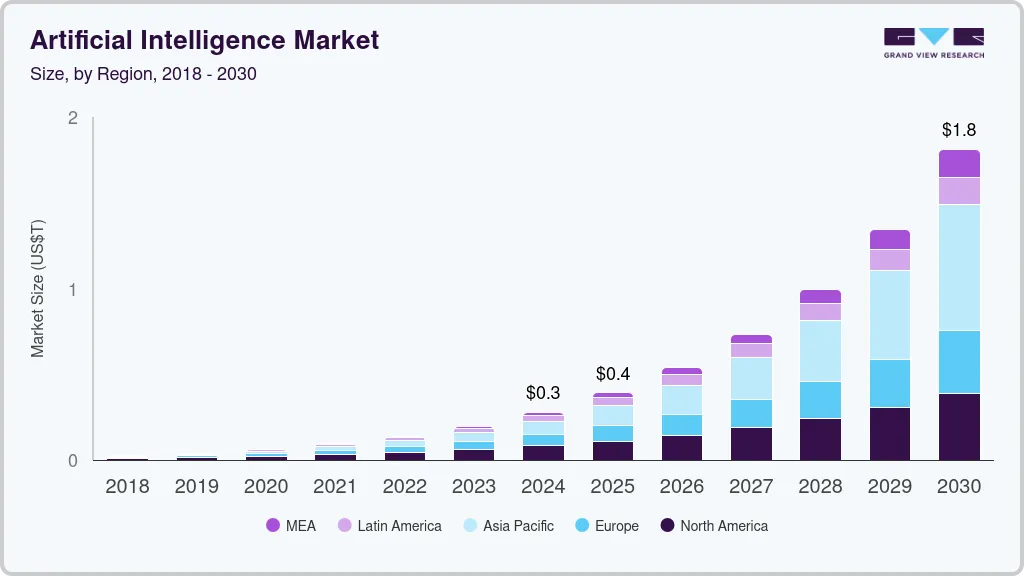

15. Generative AI adoption doubled in one year

McKinsey reports gen AI use jumped from 33% of organizations in 2023 to 65% in 2024. The fastest enterprise tech adoption curve in their tracking history.

16. Anthropic captured 40% of enterprise LLM spend share in 2025

Coverage in Calliber's LLM API trends shows Anthropic at roughly 40% of enterprise LLM spend share, with OpenAI at 27%. The market consolidated faster than most analysts predicted.

17. Enterprise LLM spending more than doubled in under a year

Enterprise LLM API spending reached $8.4 billion by mid-2025, up from $3.5 billion in late 2024. This is real production infrastructure spend, not pilot budgets.
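The "more than doubled" claim can be checked directly from the two spend figures quoted above. This is a small sketch of the arithmetic only; the function names are illustrative.

```python
def growth_multiple(start: float, end: float) -> float:
    """How many times larger the end value is than the start value."""
    return end / start


def percent_increase(start: float, end: float) -> float:
    """Relative increase from start to end, in percent."""
    return (end - start) / start * 100


late_2024_spend_bn = 3.5  # USD billions, as quoted above
mid_2025_spend_bn = 8.4   # USD billions, as quoted above

print(round(growth_multiple(late_2024_spend_bn, mid_2025_spend_bn), 1))   # 2.4
print(round(percent_increase(late_2024_spend_bn, mid_2025_spend_bn), 1))  # 140.0
```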

The AI Failure Data: Where Stacks Break Down

18. 95% of generative AI pilots fail to reach production

MIT's NANDA initiative reports 95% of enterprise gen AI pilots never make it to production deployment. Common failures: unclear ROI, integration gaps with existing systems, and no defined owner for the rollout.

19. 70-85% of AI projects fail to meet expected outcomes

RAND Corporation research puts AI project failure rates at 70-85%, roughly double the failure rate of typical IT projects. Failures cluster around poorly defined problems, not poor models.

20. 64% of AI infrastructure failures trace back to integration gaps

Infrastructure-level data covered in Calliber's AI infrastructure statistics shows 64% of failures involve integration with existing data, identity, or workflow systems, not model performance.

21. Only 26% of companies have moved beyond proof of concept

A 2024 BCG global survey of 1,000+ executives found only 26% of companies have moved beyond proof-of-concept stage and are generating tangible value from AI investments.

22. Top performers spend 70% of AI investment on people and process

The same BCG report shows leading companies allocate 70% of AI spend to people, process, and adoption, and only 30% to algorithms and tech. Underperformers invert this ratio and wonder why nothing sticks.

Stack Sprawl: The Hidden Productivity Tax

23. The average knowledge worker now toggles between 13 apps, 30 times per day

Salesforce's Connected Work survey shows the average knowledge worker now toggles between 13 apps roughly 30 times per day. AI features are embedded in most of them, but consolidation isn't keeping up.

24. Workers lose 9% of work hours to app switching

Harvard Business Review research found employees lose roughly 9% of their day, around 4 hours per week, to context switching between applications. AI tool stacks are making this worse before they make it better.
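To see how the percentage and the weekly-hours figure relate, here is a minimal sketch. The 40-hour work week is an assumption for illustration; the second function simply shows what week length would make 9% equal exactly 4 hours.

```python
def weekly_hours_lost(share_of_time: float, hours_per_week: float = 40.0) -> float:
    """Hours per week lost, given the share of work time spent switching."""
    return share_of_time * hours_per_week


def implied_week_length(hours_lost: float, share_of_time: float) -> float:
    """Week length at which the quoted hours and percentage line up exactly."""
    return hours_lost / share_of_time


print(round(weekly_hours_lost(0.09), 1))         # 3.6 (assumed 40-hour week)
print(round(implied_week_length(4.0, 0.09), 1))  # 44.4
```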

25. 60% of companies use automation, but only 4% have scaled it

Salesforce data covered in Calliber's workflow automation data shows 60% of companies use some form of workflow automation, but only 4% have scaled it across the organization. The gap between adoption and scale is where most ROI gets lost.

26. Companies using 5+ AI tools without integration see 0% net productivity gain

Internal benchmarks covered in Calliber's automation tool comparison show that teams running 5 or more AI tools without orchestration or shared context see roughly zero net productivity improvement. The tools cancel each other out through duplicated work and missed handoffs.

The Power User Gap

27. Top 20% of AI users get 3-5x more value than the median user

Microsoft's internal Copilot data shows the most active users get 3-5x more value out of the same tools as the median user. The differentiator: workflow integration and prompt skill, not licensing tier.

28. Only 39% of users have received any AI training from their employer

Microsoft and LinkedIn's Work Trend Index found 75% of knowledge workers use AI at work, but only 39% have had any AI training from their employer. Self-taught usage is the norm. Quality varies wildly.

29. 78% of AI users bring their own AI tools to work

The same report shows 78% of AI users are using their own AI tools at work (BYOAI), creating a shadow stack that IT, security, and ops teams don't see and can't measure. This is where most of the unsanctioned productivity gains are happening.

What Actually Works: Patterns From High-Performing Teams

30. Teams that document AI workflows see 2.5x higher adoption

McKinsey's research on AI high performers shows organizations with documented AI playbooks, prompt libraries, and use case standards see 2.5x higher adoption rates than teams that "let people figure it out."

31. AI-mature companies are 2.7x more likely to have a clear roadmap

The McKinsey AI high performers analysis shows mature AI organizations are 2.7x more likely than peers to have a clear AI roadmap tied to business outcomes, and 3x more likely to redesign workflows around AI rather than bolting it on.

32. ROI typically shows up in months 6-12, not weeks 1-4

Across the studies above, time-to-measurable-ROI for AI tool stacks lands in the 6-12 month range for most teams. Teams that abandon tools in the first 90 days are usually quitting before the workflow effects compound.
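One way to see why payback tends to land months out rather than weeks is to model benefits that ramp up while costs start on day one. The sketch below is a deliberately simple model with hypothetical inputs: the linear ramp, the function name, and every dollar figure are assumptions for illustration, not data from any study cited here.

```python
from typing import Optional


def payback_month(upfront_cost: float,
                  monthly_cost: float,
                  steady_monthly_benefit: float,
                  ramp_months: int,
                  horizon: int = 36) -> Optional[int]:
    """First month in which cumulative net benefit is non-negative.

    Benefit is assumed to ramp linearly from zero to its steady-state
    level over `ramp_months`. Returns None if there is no payback
    within `horizon` months.
    """
    cumulative = -upfront_cost
    for month in range(1, horizon + 1):
        ramp = min(month / ramp_months, 1.0)
        cumulative += steady_monthly_benefit * ramp - monthly_cost
        if cumulative >= 0:
            return month
    return None


# Hypothetical team: $20k rollout cost, $3k/month in licenses,
# $9k/month in benefit once workflows settle after a 6-month ramp.
print(payback_month(20_000, 3_000, 9_000, ramp_months=6))  # 8
```

With these made-up inputs the team is still underwater at month 3, which is roughly when many pilots get cancelled.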

The Practical Read: How To Use This Data

The headline number that matters isn't 55% faster coding or 14 minutes saved per day. It's the gap between adoption (high) and scale (low). 78% of organizations use AI. 4% have scaled automation. 26% have moved past proof of concept. That gap is where the real productivity story lives.

What separates the teams getting real ROI from the ones running pilot graveyards:

- They pick a small stack and integrate it deeply, instead of stacking 10+ tools loosely.

- They document workflows and prompts so the top 20% of users can teach the other 80%.

- They measure outcomes (cycle time, ticket resolution, draft quality) instead of seat counts.

- They invest 70% of their AI budget in process and adoption, 30% in tools.

- They give projects 6-12 months before declaring success or failure.

For specific tool recommendations by company stage, see Calliber's 2026 tech stack guide. For workflow tooling specifically, the workflow automation roundup breaks down what AI teams are actually buying.

Frequently Asked Questions

What's the average productivity gain from AI tools across knowledge work?

Across the major studies, knowledge workers see 10-40% productivity gains on tasks within an AI tool's capability range, with the median around 20-25%. Gains drop to zero (or negative) on tasks outside the model's frontier, which is why workflow design matters more than tool selection. Microsoft, BCG, and Stanford studies all converge on this range.

Why do most enterprise AI deployments fail?

The 70-95% failure rate isn't about model quality. It's about integration gaps, unclear success metrics, no defined owner, and bolting AI onto existing workflows instead of redesigning around it. MIT NANDA research and BCG's value survey both point to people and process issues, not tech.

How many AI tools should a typical AI-forward team run?

Research suggests fewer than most teams currently run. Companies with 5+ AI tools and no orchestration see roughly zero net productivity gain because integration overhead cancels out individual tool gains. The high performers tend to run a tight stack (3-6 core AI tools) with deep integration into their primary workflows.

How long until AI tool investments show measurable ROI?

Six to twelve months for most teams. Studies consistently show that teams pulling the plug at 30-90 days are quitting before workflow effects compound. The teams that measure outcomes (cycle time, resolution rate, output quality) and stick with it through the first year are the ones reporting clear ROI.

Are senior or junior employees getting more value from AI tools?

Junior and novice users see the largest gains across nearly every study: 27-39% productivity boost for junior developers, 35% for novice support agents, and the largest quality lifts for less experienced writers. Senior employees see smaller gains because much of what AI accelerates (boilerplate, first drafts, common patterns) they already do quickly. AI compresses the experience gap on routine work.

Want more data like this in your inbox? Calliber's newsletter goes out weekly to 15,000+ AI operators, founders, and ops leads. Independent reviews, stack recommendations, and the statistics that actually matter for tool selection. Subscribe free.

All statistics in this article were sourced from publicly available research reports, peer-reviewed studies, and analyst publications. Sources cited inline. Data current as of April 2026. Calliber is a 100% independent review site.
