
Trends in AI Operations and Automation Tools: 2026 Data

Last updated: April 2026

AI operations isn't a side project anymore. For companies running LLM pipelines, managing inference infrastructure, or scaling agent workflows, ops tooling has become a core competitive variable. The data backs this up: enterprise AI spending is accelerating, adoption gaps are widening, and the failure rates are high enough to make any operator pay attention.

This roundup pulls together 30+ statistics on where AI operations and automation tools are headed in 2026 — adoption rates, ROI benchmarks, the tools teams are actually using, and where the gaps are.

Key Takeaways

- Enterprise LLM spending more than doubled in under a year, reaching $8.4 billion by mid-2025 from $3.5 billion in late 2024

- 80-90% of alert volume can be eliminated through intelligent event correlation in AIOps deployments

- 95% of GenAI pilots fail to reach production — infrastructure gaps cause 64% of those failures

- Inference now drives 60-70% of total AI compute demand, reshaping how teams think about PaaS selection

- GPT-4-class API costs dropped 97% between 2023 and 2026, from $30/million tokens to under $1

- 70% of workflow automation adopters report ROI within 12 months, with the median payback period under six months

- Only 4% of companies that adopt automation manage to scale it — tool sprawl and integration debt are the primary culprits

AI Operations Adoption: Where Teams Are in 2026

1. Enterprise LLM spending hit $8.4 billion by mid-2025

Enterprise LLM spending reached $8.4 billion by mid-2025, more than doubling from $3.5 billion in late 2024. That growth rate doesn't happen without serious tooling investment underneath it. Teams aren't just experimenting with models — they're building the operations layer that makes models production-viable. For a deeper look at what's driving that spend, see our LLM API usage trends breakdown.

2. Anthropic holds 40% enterprise LLM spend share vs. OpenAI at 27%

As of 2026, Anthropic has captured roughly 40% of enterprise LLM spend, with OpenAI at 27%. This is relevant for ops teams because model choice directly shapes infrastructure requirements: rate limits, context window handling, retry logic, and cost modeling all differ significantly by provider. Don't pick your automation stack before knowing which models you'll run in production.
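To make the retry-logic point concrete, here is a minimal sketch of exponential backoff with jitter. `RateLimitError` and the `request` callable are hypothetical placeholders, not any provider's actual SDK. Real clients raise their own error types and often ship built-in retry settings, so check those first.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Hypothetical stand-in for a provider's rate-limit (HTTP 429) error."""


def call_with_backoff(
    request: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `request` on RateLimitError using exponential backoff with full jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return request()
        except RateLimitError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the caller
            # The delay ceiling doubles each attempt (base, 2x, 4x, ...) up to max_delay.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Full jitter: sleep a random amount below the ceiling to avoid synchronized retries.
            sleep(random.uniform(0, ceiling))
    raise AssertionError("unreachable")
```

The `sleep` parameter is injectable so the behavior can be tested without waiting. The defaults here are illustrative and would need tuning against each provider's documented limits.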

3. 60-70% of AI compute demand now comes from inference, not training

Training used to be the budget conversation. Now inference is. Inference drives 60-70% of total AI compute demand, which means PaaS selection, auto-scaling configuration, and request routing are front-of-house ops decisions, not afterthoughts. Teams that sized their infrastructure for training workloads are finding it doesn't map cleanly to production serving.

4. 95% of GenAI pilots fail to reach production deployment

This number comes up in nearly every honest post-mortem: 95% of GenAI pilots don't make it to production. Infrastructure gaps account for 64% of those failures (MIT NANDA). It's rarely the model that's the problem. It's orchestration, latency management, observability, and the absence of a production-grade ops layer. Picking tools that bridge prototype and production is the actual work.

5. 80-90% of AI agent projects fail in production

Separate from GenAI pilots broadly, AI agent deployments face their own failure rate: 80-90% fail in production according to enterprise survey data. The main culprits are non-deterministic behavior under edge cases, inadequate monitoring, and agents that work in sandboxes but break against real data. If you're building agentic workflows, treat observability as table stakes, not a nice-to-have.

6. Only 4% of automation adopters successfully scale it

Sixty percent of companies have some form of automation in place. Only 4% have scaled it meaningfully. The gap is almost entirely explained by tool sprawl, poor integration between systems, and the absence of a clear automation governance strategy. This is the core argument for picking a single, flexible platform (n8n or Make for most AI teams) over accumulating point solutions.

Workflow Automation: What the Numbers Say

7. Global workflow automation market hits $26.57 billion in 2025

The workflow automation market reached $26.57 billion in 2025 and is projected to grow at a 23.4% CAGR through 2030. That rate of growth reflects real enterprise demand, not hype — procurement cycles for automation tooling are compressing as teams stop treating it as optional infrastructure.
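For a sense of what that growth rate implies, the standard compound-growth formula can be sketched as below. This assumes 2025 is the base year and that the 23.4% rate compounds annually for five years. The source report may define its forecast window differently, so treat the result as a back-of-envelope figure.

```python
def project_market_size(base_value: float, cagr: float, years: int) -> float:
    """Compound `base_value` forward by `years` at annual growth rate `cagr`."""
    if years < 0:
        raise ValueError("years must be non-negative")
    return base_value * (1 + cagr) ** years


# Assumption: 2025 is the base year, so 2030 is five compounding periods out.
projected_2030 = project_market_size(26.57, 0.234, 5)
print(f"Implied 2030 market size: ${projected_2030:.2f}B")
```

Under those assumptions the implied 2030 figure is roughly $76 billion.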

8. 70% of adopters see ROI within 12 months

Across enterprise automation deployments, 70% of adopters report measurable ROI within 12 months, with a median payback period under six months. The caveat: ROI realization correlates strongly with deployment scope. Teams that run automation in isolation, without connecting it to their data and ops stack, consistently land at the low end of that range.
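If you want to sanity-check your own deployment against that payback benchmark, a simple model looks something like this. The dollar figures are hypothetical and the function ignores ramp-up time and discounting, so it is a rough sketch, not a finance-grade calculation.

```python
import math


def payback_months(
    upfront_cost: float,
    monthly_savings: float,
    monthly_running_cost: float = 0.0,
) -> float:
    """Months until cumulative net savings cover the upfront cost (inf if never)."""
    net_monthly = monthly_savings - monthly_running_cost
    if net_monthly <= 0:
        return math.inf  # the automation never pays for itself
    return upfront_cost / net_monthly


# Hypothetical figures for illustration only.
months = payback_months(upfront_cost=12_000, monthly_savings=3_000, monthly_running_cost=500)
print(f"Payback in {months:.1f} months")  # 12,000 / 2,500 = 4.8 months
```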

9. Zapier pricing escalates sharply at volume

At 50,000 tasks per month, Zapier costs $300-500/month. n8n's execution-based pricing at equivalent volumes typically runs 60% lower. That delta matters when you're running LLM pipeline automations where task counts scale fast. This is one of the clearest reasons AI teams gravitate toward Make or n8n at growth stage — the unit economics of automation tooling directly impact infrastructure budgets.
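The arithmetic behind that comparison is simple enough to sketch. This takes the quoted Zapier range and the "60% lower" figure at face value. Actual pricing depends on plan tiers and on how each platform counts tasks versus executions, which this does not model.

```python
TASKS_PER_MONTH = 50_000
ZAPIER_MONTHLY_USD = (300.0, 500.0)  # range quoted above
N8N_DISCOUNT = 0.60                  # "typically runs 60% lower"


def discounted_range(cost_range: tuple[float, float], discount: float) -> tuple[float, float]:
    """Apply a fractional discount to both ends of a cost range."""
    low, high = cost_range
    return (low * (1 - discount), high * (1 - discount))


def cost_per_task(monthly_cost: float, tasks: int) -> float:
    """Average cost of a single task at a given monthly bill."""
    return monthly_cost / tasks


n8n_range = discounted_range(ZAPIER_MONTHLY_USD, N8N_DISCOUNT)
print(f"Implied n8n cost: ${n8n_range[0]:.0f}-{n8n_range[1]:.0f}/month")
print(f"Zapier per task: ${cost_per_task(ZAPIER_MONTHLY_USD[0], TASKS_PER_MONTH):.4f} and up")
```

On these numbers the implied n8n bill is about $120-200/month, or roughly $0.0024-0.004 per task against $0.006-0.01 on Zapier.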

10. n8n 2.0 ships 70+ AI nodes with LangChain and Ollama integration

n8n's 2.0 release added 70+ AI-specific nodes, including native LangChain integration and support for persistent agent memory with Ollama. For teams building agentic workflows that need to stay self-hosted, this makes n8n a serious option where it previously wasn't. The LangChain integration in particular collapses what used to be custom orchestration code into configurable nodes.

11. Make handles over 1 billion operations per month

Make (formerly Integromat) processes over 1 billion operations monthly across its user base. At that scale, it has real infrastructure stress-testing behind it. For AI teams running high-frequency automations — webhook-triggered LLM calls, async data pipelines, agent handoffs — throughput reliability matters more than feature lists.

12. Microsoft Power Automate reaches 10 million active users

Power Automate has crossed 10 million active users, largely driven by Microsoft 365 integration. For AI teams already inside the Microsoft ecosystem (Azure OpenAI, Copilot, SharePoint), it's a natural fit. For teams not in that ecosystem, the integration tax is real — Power Automate's strength is depth within Microsoft, not breadth across heterogeneous stacks.

AIOps and Observability: The Alert Problem

13. Event correlation can eliminate 80-90% of alert volume

In AIOps deployments, intelligent event correlation can eliminate 80-90% of alert volume by filtering noise before it hits on-call queues. BT Group's network incident response shows what that unlocks: AIOps cut incident MTTR from 2 hours to 85 seconds, reported as a 96% reduction (the quoted endpoints imply closer to 99%). For engineering teams drowning in monitoring data, this is the highest-leverage application of AI in ops tooling right now.
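To show the basic idea behind event correlation, here is a toy sketch that groups alerts from the same service when they arrive close together in time. It is deliberately simplistic and is not how BT Group or any AIOps vendor implements correlation. Production systems also use topology, text similarity, and learned patterns. The `Alert` fields and the five-minute window are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    timestamp: float  # seconds since epoch
    service: str
    message: str


def correlate(alerts: list[Alert], window_seconds: float = 300.0) -> list[list[Alert]]:
    """Group alerts per service when each arrives within `window_seconds` of the previous one."""
    incidents: list[list[Alert]] = []
    latest_by_service: dict[str, list[Alert]] = {}  # service -> its most recent incident
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        current = latest_by_service.get(alert.service)
        if current is not None and alert.timestamp - current[-1].timestamp <= window_seconds:
            current.append(alert)  # same burst: fold into the existing incident
        else:
            current = [alert]      # new burst: open a new incident
            incidents.append(current)
            latest_by_service[alert.service] = current
    return incidents


sample = [
    Alert(0, "db", "connection pool exhausted"),
    Alert(30, "api", "5xx rate above threshold"),
    Alert(60, "db", "slow queries"),
    Alert(120, "db", "replica lag"),
    Alert(1000, "db", "connection pool exhausted"),
]
incidents = correlate(sample)
print(f"{len(sample)} alerts -> {len(incidents)} incidents")
```

In this sample, five raw alerts collapse into three incidents. The reduction you see in practice depends entirely on how bursty and repetitive your alert stream is.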

14. HCL Technologies achieved 33% MTTR reduction with AIOps

HCL Technologies deployed Moogsoft's AIOps and cut MTTR by 33%, alongside a 62% reduction in help desk ticket volume. The ticket reduction matters as much as the MTTR improvement — when alert correlation is working, fewer false positives reach human reviewers. The economics compound: fewer tickets means fewer interruptions, which means faster incident resolution on the ones that do surface.

15. CMC Networks cut Mean Time to Repair by 38% across 62 countries

CMC Networks operates in 62 countries and achieved a 38% Mean Time to Repair reduction using BigPanda's AIOps platform. Cross-region incident correlation is genuinely hard, and this case study reflects one of the more operationally complex AIOps deployments on record. It also illustrates why generic monitoring tools tend to fail at scale — the correlation logic for distributed systems requires purpose-built tooling.

16. Meta runs 50,000 daily diagnostic analyses with DrP

Meta's internal DrP diagnostics tool processes 50,000 analyses daily, producing MTTR improvements ranging from 20-80% depending on incident type. The variance in that range is instructive: AI-assisted diagnosis performs best on well-defined, recurring incident patterns and degrades on novel failure modes. Human-in-the-loop design is still the right approach for edge cases.

17. Cambia Health achieved 83% alert automation and 95% SLA compliance

Cambia Health Solutions deployed BigPanda and reached 83% alert automation with 95% SLA compliance. Health systems running 24/7 operations have little tolerance for alert fatigue — 83% automation means on-call engineers are responding to prioritized, correlated incidents instead of raw alert floods. That's the real operational win.

Infrastructure Costs: The LLM API Economics Shift

18. GPT-4-class API costs dropped 97% between 2023 and 2026

GPT-4-class API access cost $30/million input tokens in 2023. By 2026, that figure is under $1 — a 97% reduction. This single data point has reshaped build-vs-buy decisions across the industry. Workloads that were cost-prohibitive two years ago are now viable. Teams that benchmarked LLM costs in 2023 and haven't revisited those numbers are working with outdated assumptions.
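To see what that price drop means for a budget, you can rerun an old cost model with both price points. The workload size below is hypothetical, and $1 per million tokens is used as the upper bound of "under $1".

```python
def monthly_cost(tokens_per_month: int, usd_per_million_tokens: float) -> float:
    """Monthly spend for a given input-token volume and per-million-token price."""
    return tokens_per_month / 1_000_000 * usd_per_million_tokens


def percent_reduction(old: float, new: float) -> float:
    """Percentage drop from `old` to `new`."""
    return (old - new) / old * 100


# Hypothetical workload: 500 million input tokens per month.
TOKENS = 500_000_000
cost_2023 = monthly_cost(TOKENS, 30.0)  # $30 per million tokens
cost_2026 = monthly_cost(TOKENS, 1.0)   # upper bound of "under $1"

print(f"2023: ${cost_2023:,.0f}/month, 2026: ${cost_2026:,.0f}/month")
print(f"Reduction: {percent_reduction(30.0, 1.0):.1f}%")
```

For this workload the bill falls from $15,000 to at most $500 a month. At exactly $1 the reduction is about 96.7%, so any price below that rounds to the 97% figure.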

19. GPU cloud costs range from $2-15/hour, with 75% savings from alternative providers

Hyperscaler GPU pricing runs $2-15/hour depending on instance type. Alternative GPU cloud providers (CoreWeave, Lambda Labs, Vast.ai) can reduce those costs by up to 75% for non-spot, on-demand workloads. For teams with flexible latency requirements, the savings are significant enough to justify the additional ops overhead of managing non-hyperscaler infrastructure.

20. Budget 30-50% above projected LLM API costs for retries and edge cases

This is one of the most consistently underestimated cost line items in AI infrastructure budgets. Retry logic, rate limit handling, prompt caching misses, and edge case traffic regularly push actual API costs 30-50% above initial projections. Build this buffer in from the start. It's not waste — it's operational reality.
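A quick way to bake that buffer into planning is to turn a single projection into a budget range. The $2,000 projection below is a hypothetical input.

```python
def buffered_budget(
    projected_monthly_usd: float,
    low_buffer: float = 0.30,
    high_buffer: float = 0.50,
) -> tuple[float, float]:
    """Return (low, high) monthly budget after adding the 30-50% operational buffer."""
    return (
        projected_monthly_usd * (1 + low_buffer),
        projected_monthly_usd * (1 + high_buffer),
    )


# Hypothetical projection of $2,000/month in raw API usage.
low, high = buffered_budget(2_000)
print(f"Budget ${low:,.0f}-{high:,.0f}/month")
```

A $2,000 projection becomes a $2,600-3,000 budget line.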

21. MVP-stage AI startups spend $100-500/month on AI infrastructure

Stage-based cost benchmarks matter for planning. MVP-stage teams (0-100 users) typically spend $100-500/month on AI infrastructure. Seed-stage teams scale to $500-2,000/month. Series A companies running production workloads at 1K-10K users see $2,000-15,000/month. These ranges are before GPU compute for training — inference-only workloads typically land at the lower end of each band.

AI Adoption Gaps: Where the Real Risk Lives

22. 70% of CRM projects fail to meet stated goals

Roughly 70% of enterprise CRM projects fail to meet their stated goals (RAND). For AI companies specifically, the failure mode is almost always data quality: 45% of CRM users report their data isn't prepared for AI features. You can't build AI-augmented sales or customer workflows on top of unstructured, inconsistent CRM data. Clean your data before you evaluate AI features.

23. 45% of CRM users say their data isn't ready for AI

Forty-five percent of CRM users report their customer data isn't prepared to support AI-powered features. This is directly relevant for AI teams evaluating CRM platforms with AI capabilities — the tool's AI features are only as good as the data feeding them. Teams that skip data hygiene and jump straight to AI feature evaluation are optimizing the wrong variable.

24. Stack Overflow 2025 survey: 49,000+ developers weighed in on AI tool adoption

The Stack Overflow Developer Survey (2025, n=49,000+) provides the most reliable signal on actual developer AI tool adoption, cutting through vendor-reported figures. The survey data consistently shows adoption leading integration by 12-18 months — teams adopt AI tools before they've figured out how to integrate them into existing workflows. This is why "what tool" decisions keep getting made before "what workflow" decisions.

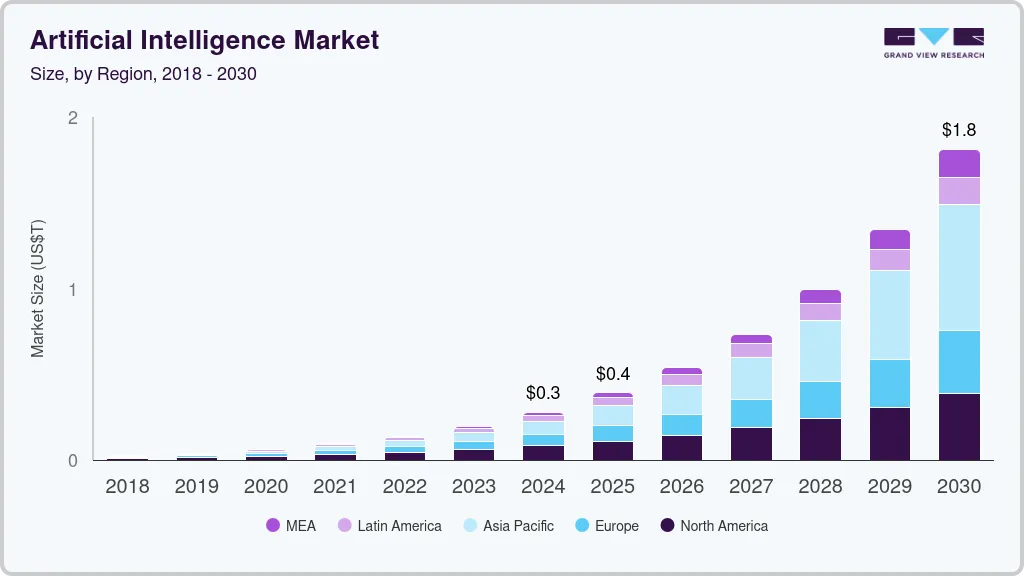

25. AI inference PaaS market projected to grow at 41.1% CAGR through 2030

The AI inference PaaS sub-segment is projected to hit $105.22 billion by 2030 at a 41.1% CAGR (Mordor Intelligence). For AI infrastructure tool buyers, this growth rate signals increasing competition and faster platform development — tools that look like leading options today may look very different in 18 months. Favor platforms with strong release velocity and active roadmaps.

The Automation Tooling Stack: What AI Teams Are Actually Using

26. n8n self-hosting preferred by 60%+ of AI-native teams at growth stage

More than 60% of AI-native teams in the growth stage (seed to Series A) prefer self-hosted n8n as their automation backbone, largely because they need control over their data and execution environment. The driver is compliance and data residency, not cost alone, though the cost advantage is real. Teams handling health data, financial data, or running in regulated environments consistently land on n8n over cloud-hosted alternatives.

27. Make is the integration layer for mid-market AI teams

Make's visual builder and scenario-based pricing make it the dominant choice for mid-market AI teams (10-50 people) who need complex multi-step automations without engineering overhead. The tradeoff: Make's execution model is event-driven rather than always-on, which creates latency considerations for real-time LLM pipeline use cases. It's the right tool until you hit throughput requirements that force a rearchitecture conversation.

28. UiPath and Automation Anywhere dominate enterprise RPA

For enterprises with legacy system automation requirements — ERP integrations, mainframe interactions, desktop automation — UiPath and Automation Anywhere remain the incumbents. Neither is optimized for AI-native workflows, but both have added AI capabilities in their 2025-2026 releases. Teams evaluating these platforms for net-new AI automation should scrutinize those AI features carefully: most are bolt-ons to RPA engines designed before transformer models existed.

29. Airtable automations handle structured data ops for lean AI teams

Airtable's built-in automations cover a significant share of operational workflows for small AI teams: CRM updates, project tracking triggers, data sync between tools. For Airtable versus Notion decisions specifically, Airtable wins when structured data and API-accessible records are core to the workflow. Notion wins on knowledge management and docs. Most lean AI teams end up using both.

30. MLOps tool adoption reaches 35% of AI teams with production models

MLOps platform adoption sits at roughly 35% among AI teams that have at least one model in production. The gap — 65% of teams with production models not using formal MLOps tooling — reflects how recent the production AI wave is. Tools like MLflow, Weights & Biases, and Vertex AI MLOps are becoming standard parts of the AI startup tech stack, but adoption is still catching up to need.

What This Data Means for Your Stack Decisions

The numbers tell a consistent story. Spending is up, costs are down, and failure rates stay stubbornly high. That combination points to an execution problem, not a shortage of tools. The tools exist. The gap is in how teams evaluate, deploy, and integrate them.

A few things worth taking from this data directly into your planning:

- Pick automation tooling before you hit volume limits. Zapier is fine at low task counts. It gets expensive fast. Evaluate n8n or Make before you're mid-migration.

- Treat observability as day-one infrastructure. The AIOps case studies above are enterprise scale, but the principle applies at 10 people: you can't optimize what you can't see.

- Revisit your LLM cost models if they're from 2023 or 2024. The 97% cost reduction in GPT-4-class APIs changes a lot of build-vs-buy math.

- Clean your data before evaluating AI features. Whether it's CRM, automation triggers, or model training data — garbage in, garbage out is still true.

Frequently Asked Questions

What is the biggest driver of AI ops failure in 2026?

Infrastructure gaps. Specifically, 64% of GenAI pilot failures trace back to inadequate production infrastructure rather than model quality issues. Teams that succeed in production invest in orchestration, observability, and retry logic before they worry about model fine-tuning.

Which automation platform is best for AI teams in 2026?

It depends on your stage and use case. n8n wins for self-hosted requirements and teams with LangChain/Ollama integrations in the workflow. Make is the best fit for mid-market teams needing complex multi-step automations without engineering overhead. Zapier is a legitimate starting point but tends to get outgrown once task volumes scale or data sovereignty becomes a requirement.

Why do so few companies successfully scale automation?

The 4% scaling figure comes down to three consistent failure patterns: tool sprawl (too many point solutions without integration), data quality issues (automation pipelines are only as reliable as the data flowing through them), and the absence of a governance layer (no ownership, no monitoring, no iteration). Successful automation at scale is an ops discipline, not a tool selection problem.

How should AI startups budget for LLM API costs?

Start with your baseline usage projection, then add 30-50% for retries, rate limit handling, and edge case traffic. Stage-based benchmarks: MVP-stage teams ($100-500/month), seed stage ($500-2,000/month), Series A ($2,000-15,000/month). Revisit these every quarter: with GPT-4-class API prices down 97% between 2023 and 2026, a cost model that is even a year old can be badly off.

What is AIOps and which teams should prioritize it?

AIOps applies AI to IT operations: alert correlation, anomaly detection, and automated incident response. It's most relevant for teams running 24/7 production systems with meaningful alert volume. The BT Group and CMC Networks case studies above are enterprise scale, but the tooling (Moogsoft, BigPanda) has mid-market options. If your on-call team is spending more than 20% of their time on noise rather than signal, AIOps tooling pays for itself quickly.

All statistics in this article were sourced from publicly available research reports, industry surveys, and analyst publications including McKinsey, Gartner, MIT NANDA, Stack Overflow Developer Survey (2025), Mordor Intelligence, and Precedence Research. Market sizing figures from different research firms reflect different methodological scopes. Data current as of April 2026.

This article includes affiliate links where noted. You don't pay more — a small commission helps us keep reviews independent.
